There is a TON of hype around ChatGPT. Let’s start with a basic definition. What is ChatGPT and why care? ChatGPT is an AI-driven natural language processing tool that lets you hold human-like conversations with a chatbot, and much more. The language model can answer questions and assist you with tasks such as composing emails, essays, and code. Chatbots have been around for a while, but recent improvements in AI have made this one extremely effective across many use cases.

Why care from a cyber security standpoint? I can answer this with both a positive and a negative outlook.

Positives of ChatGPT:

New use cases for this type of technology continue to appear; however, the following are some of the most interesting.

- Language – Threat actors come from all parts of the globe, and language is often a barrier when performing threat research. I have been flagged many times for “not sounding local” and bounced from forums. ChatGPT could help remove this barrier, opening doors for threat research and collaboration with various global groups.

- Simplified coding – Higher levels of security maturity require automation and orchestration. There are just too many tasks and too much data to run a security operations center manually. One of the biggest challenges with automation and orchestration is the coding, aka DevOps. ChatGPT opens the door for people without coding skills to turn spoken or written requests into code, leading to simplified DevOps.

- Speed – The combination of these factors, such as language barriers being broken down, simplified coding, and other voice-enabled automated tasks, can dramatically improve the speed of delivering services.

- Threat evaluation – AI continues to bring exciting new ways to detect threats, and ChatGPT belongs in that conversation. Malware is code, so if ChatGPT can make code easier to understand, it can also help identify potentially malicious code.
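
To make the simplified coding point a bit more concrete, here is a minimal sketch of the kind of small automation script a security analyst might ask a tool like ChatGPT to draft from a plain-language request such as “count failed logins per IP address and flag the noisy ones”. This is my own illustration, not actual ChatGPT output. The log format, the function names, and the threshold are all assumptions made for the example.

```python
import re
from collections import Counter

# ASSUMPTION: log lines look like OpenSSH "Failed password" messages.
# Real log formats vary, so the pattern would need adjusting.
FAILED_LOGIN = re.compile(
    r"Failed password for .* from (\d{1,3}(?:\.\d{1,3}){3})"
)

# Hypothetical sample data using documentation-reserved IP ranges.
SAMPLE_LINES = [
    "sshd[1]: Failed password for root from 203.0.113.7 port 22 ssh2",
    "sshd[1]: Failed password for root from 203.0.113.7 port 22 ssh2",
    "sshd[1]: Failed password for invalid user admin from 203.0.113.7 port 22 ssh2",
    "sshd[1]: Failed password for bob from 198.51.100.4 port 22 ssh2",
    "sshd[1]: Accepted password for alice from 192.0.2.10 port 22 ssh2",
]


def count_failed_logins(lines):
    """Count failed-login lines per source IP address."""
    counts = Counter()
    for line in lines:
        match = FAILED_LOGIN.search(line)
        if match:
            counts[match.group(1)] += 1
    return counts


def flag_noisy_sources(counts, threshold=3):
    """Return the IPs with at least `threshold` failed logins, sorted."""
    return sorted(ip for ip, n in counts.items() if n >= threshold)


if __name__ == "__main__":
    counts = count_failed_logins(SAMPLE_LINES)
    print(flag_noisy_sources(counts))
```

A script like this is trivial for a programmer, but it is exactly the sort of task that used to stall analysts without coding skills. Anything generated this way should still be reviewed by someone before it runs against production data.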

Negatives of ChatGPT:

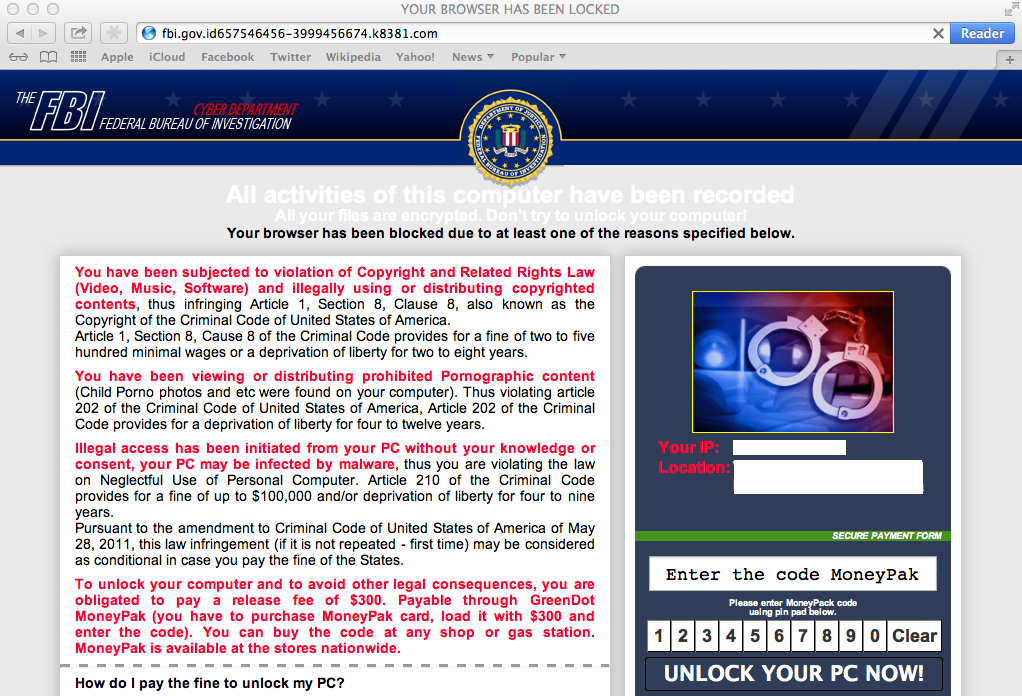

A lot of the positives can be flipped into negatives as well. There are rules in place for how ChatGPT is supposed to be used, so creating malware or phishing attacks is not supposed to be permitted. However, we all know threat actors will find a way to abuse something that works, regardless of the rules in place. Here are some examples of how ChatGPT could, in theory, be used by threat actors.

- Language – Threat actors come from all parts of the globe, and language is often a barrier when attacking targets. ChatGPT can clean up the common “broken language” signs found in phishing emails and other phishing-related attacks, making phishing a much bigger threat.

- Simplified coding – Threat actors can generate more complex malware using the same coding shortcuts available to any programmer.

- Speed – The combination of these factors, such as language barriers being broken down, simplified coding, and other voice-enabled automated tasks, can dramatically increase the number of new threat actors as well as the volume of attacks.

- Creating Fake Profiles – Since language is no longer a barrier, ChatGPT can dramatically improve the quality of fake accounts and bot behavior, creating more unwanted noise and making targeted attack campaigns more effective.

- Defense bypass – AI-based code analysis can help threat actors bypass defense tools faster and more effectively.

I want to stress that the makers of ChatGPT and other AI-driven platforms don’t want their tools abused and will try to reduce malicious behavior. The unfortunate byproduct of any good thing is that it can be abused, and it’s best we all view ChatGPT as something that will have both a positive and a negative impact on the cyber community. I relate this to the situation when cryptocurrency was introduced. Many saw the value of what cryptocurrency meant to banking, but it also made ransomware a huge problem, since threat actors gained an anonymous way to accept ransom payments. Hopefully, the positives of ChatGPT will outweigh the negatives. Only time will tell.